There’s a common assumption in the restaurant tech world that “human + AI” must be better than “pure AI.” The logic goes: humans handle the exceptions, AI handles the routine, and together they’re more reliable than either alone. That logic made sense in 2018. It’s less true every year — and in the specific context of restaurant phone ordering in 2026, it’s worth challenging directly.

The hybrid model has a fundamental structural ceiling. At some point, the human in the loop stops being a safety net and starts being a bottleneck. When I was designing Tunvo’s AI voice architecture, we spent significant time with restaurant owners who had tried hybrid systems and kept hitting the same two problems: peak-hour call stacking (multiple customers waiting because agents were occupied) and inconsistency (different agents handling the same modifier request differently on different calls). Pure LLM AI addresses both at the architectural level, not by being smarter than a human on any given call, but by applying the same logic to every call and handling calls in parallel rather than one per agent.

This article is about the technical and operational case for pure LLM-based voice AI in restaurant ordering. We’ll look at why the hybrid model has real limitations, what LLM architecture does differently, and where the technology is heading.

Key Takeaways

- Hybrid models create capacity bottlenecks — human agents can’t handle unlimited simultaneous calls; AI can.

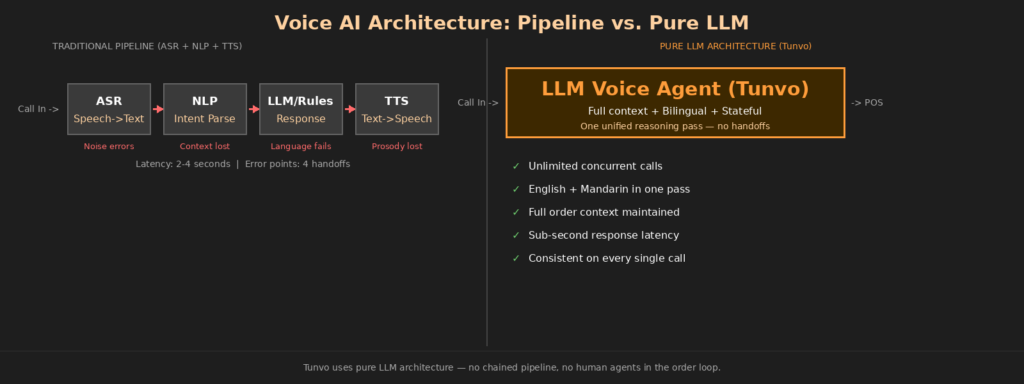

- Traditional voice AI pipelines (ASR + NLP + TTS) compound errors — each handoff between models loses information. LLMs handle the full conversation in one coherent pass.

- Restaurant ordering is structured enough for pure AI — the order space is finite and definable, unlike complex customer support scenarios.

- Cost economics favor pure AI decisively — AI voice costs are measured in cents per call; human labor in dollars.

- For Chinese restaurants specifically, LLM-based systems handle bilingual conversations naturally; hybrid models typically require separate language routing or differently trained agents.

The Structural Problem With Human + AI Hybrids

The hybrid model works like this: AI handles the front-end of the call — greeting, collecting information, routing — and a human agent steps in to close the order, handle unusual requests, or take over when the AI signals uncertainty. In theory, this captures the best of both.

In practice, you’re still paying for human labor on every call that escalates, and “escalation” can be triggered by anything from a genuinely complex order to a slightly unusual accent the AI didn’t parse correctly. Restaurant ordering generates a lot of minor escalations: a customer asks “can I get the lunch portion at dinner price” or uses a dish name the system hasn’t been specifically trained on. Each one routes to a human. Each one costs money and introduces the variability of different agents handling the same request differently.

More critically: AI voice’s primary operational advantage over human-staffed models is unlimited scalability — it can handle demand spikes without hiring extra staff. A hybrid model sacrifices that advantage the moment it routes calls to human agents. If your Friday dinner rush produces eight simultaneous calls and you have three agents available, five customers are waiting. The AI portion of the system is not helping anyone in that moment.
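The Friday-rush arithmetic above can be sketched as a tiny queue model. This is an illustration only: the function name `hold_times` and the four-minute handling time are assumptions made for the example, not figures from any vendor.

```python
import heapq


def hold_times(num_calls: int, agents: int, handle_minutes: float) -> list[float]:
    """Minutes each caller waits when all calls arrive at the same moment."""
    # Each heap entry is the time at which one agent next becomes free.
    free_at = [0.0] * agents
    heapq.heapify(free_at)
    waits = []
    for _ in range(num_calls):
        start = heapq.heappop(free_at)  # earliest available agent
        waits.append(start)             # every caller arrived at t = 0
        heapq.heappush(free_at, start + handle_minutes)
    return waits


# Eight simultaneous calls, three agents, an assumed 4 minutes per call.
waits = hold_times(num_calls=8, agents=3, handle_minutes=4.0)
print(sum(1 for w in waits if w > 0))  # 5 callers are left on hold
```

Under these assumptions the last two callers wait through two full handling cycles, which is the stacking effect owners described.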

Why Traditional Voice AI Pipelines Fall Short

It’s important to distinguish between “pure AI” and “good AI.” Not all AI voice systems are equivalent. The earlier generation of restaurant voice AI — including systems built between 2015 and 2022 — used a chained pipeline: automatic speech recognition (ASR) converts speech to text, a natural language processing (NLP) model interprets the text, and a text-to-speech (TTS) engine generates the audio response.

This pipeline has two core weaknesses for restaurant use. First, errors compound: if ASR mishears a word — say, “shrimp” transcribed as “trim” — that error passes to the NLP model, which interprets corrupted input, which may generate an incorrect response. The chain has no self-correction mechanism at the transcription stage.
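A rough way to see why chained errors compound: if each stage is right some fraction of the time and the errors are independent, the end-to-end rate is the product of the stage rates. The per-stage numbers below are hypothetical, chosen only to show the effect, and the independence assumption is a simplification.

```python
import math


def end_to_end_accuracy(stage_accuracies: list[float]) -> float:
    """Probability that every stage is correct, assuming independent errors."""
    return math.prod(stage_accuracies)


# Hypothetical accuracies for the transcription and interpretation stages.
print(f"{end_to_end_accuracy([0.95, 0.95]):.2%}")
```

Two stages that each look acceptable on their own come out near 90% together, and every extra stage pushes the figure lower.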

Second, context degrades: each model in the chain processes its slice of the conversation in isolation. The NLP model receives text, not audio. It can’t hear that the customer said “no garlic” with emphasis on “no.” It can’t pick up the hesitation that signals the customer isn’t sure about a modifier. Modern AI voice architecture research notes that this handoff chain “adds cumulative delays and loses prosody, tone, and speaker identity” — precisely the cues that make human order-takers good at catching ambiguous requests.

What LLM-Based Architecture Does Differently

A large language model handles the full conversation in a unified, context-aware pass. Instead of transcribing speech to text and then running that text through a separate reasoning model, an LLM-based system maintains the full conversation context — including the current order state, prior turns, and the structure of the menu — as a single coherent input to its reasoning.

This matters in restaurant ordering because orders are inherently stateful. A customer says “I want the number 14,” and the AI needs to know whether that’s a lunch special or a dinner combination based on the time of day and the menu structure. “Same as last time” is harder, because it depends on the caller’s order history. And “actually, change the rice to noodles” mid-order requires the AI to hold the full order state and apply a partial modification without losing the rest.
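To make "partial modification" concrete, here is a minimal sketch of an order state that a voice agent could keep across turns. The class and field names (`OrderState`, `OrderItem`, `change_modifier`) are hypothetical and are not taken from Tunvo or any other product.

```python
from dataclasses import dataclass, field


@dataclass
class OrderItem:
    name: str
    quantity: int = 1
    modifiers: dict[str, str] = field(default_factory=dict)


@dataclass
class OrderState:
    items: list[OrderItem] = field(default_factory=list)

    def add(self, item: OrderItem) -> None:
        self.items.append(item)

    def change_modifier(self, item_name: str, slot: str, value: str) -> None:
        """Update one modifier on one item and leave everything else untouched."""
        for item in self.items:
            if item.name == item_name:
                item.modifiers[slot] = value
                return
        raise KeyError(f"{item_name!r} is not in the current order")


order = OrderState()
order.add(OrderItem("Number 14", modifiers={"base": "rice", "spice": "mild"}))
order.add(OrderItem("Diet Coke", quantity=2))

# "Actually, change the rice to noodles."
order.change_modifier("Number 14", "base", "noodles")
print(order.items[0].modifiers)  # {'base': 'noodles', 'spice': 'mild'}
```

The point of the design is that the correction touches a single slot: the spice level and the second item survive the change without the caller repeating them.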

Voice agent architecture research describes real-time LLM approaches as best suited for “AI concierges, live assistants in fast-paced environments.” A restaurant phone during dinner rush is exactly that environment — fast-paced, stateful, with constant interruptions and changes of direction that require maintaining context across the whole conversation.

Handling the Noisy Restaurant Scenario

One specific challenge for any voice AI in restaurant use is background noise — and this is a real objection that comes up in our product conversations regularly. Kitchens are loud. Customers often call from loud environments. Traditional ASR systems degrade significantly under noise because they’re optimized for clean speech conditions.

As Gladia’s research on speech recognition challenges describes, background noise, poor microphone quality, and telephony compression all impact ASR accuracy — and these are everyday conditions in the restaurant ordering context. Modern LLM-based systems handle this better through contextual inference: even if a word is partially obscured by noise, the model can infer the likely intent from the surrounding context of the conversation and the constrained domain of a restaurant menu.
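The value of a constrained domain can be shown with a deliberately simple stand-in. The sketch below uses plain string similarity from Python's standard `difflib` module, not an LLM, to map a misheard word onto a small menu vocabulary. The vocabulary list and the 0.5 cutoff are assumptions made for the example.

```python
import difflib
from typing import Optional

# Hypothetical protein options from a menu.
MENU_PROTEINS = ["shrimp", "beef", "chicken", "pork", "tofu"]


def resolve_to_menu(heard: str, vocabulary: list[str], cutoff: float = 0.5) -> Optional[str]:
    """Return the closest menu term to what was heard, or None if nothing is close."""
    matches = difflib.get_close_matches(heard.lower(), vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else None


print(resolve_to_menu("trim", MENU_PROTEINS))  # shrimp
print(resolve_to_menu("zzz", MENU_PROTEINS))   # None
```

A real system would weigh the whole conversation rather than one word, but the principle is the same: when the set of valid answers is small, a partially garbled input can still land on the right one.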

The Bilingual Advantage

For Chinese restaurants specifically, LLM-based systems handle code-switching — when a speaker moves between English and Mandarin mid-sentence — in a way that traditional ASR + NLP pipelines struggle with. Traditional systems typically need language-specific models that are routed to when language is detected. Code-switching confuses the detection layer, producing routing errors. An LLM trained on multilingual data handles mixed-language input as a single context, without the routing step.

This isn’t a minor edge case in New York Chinese restaurants. Many regulars mix languages naturally: “I want the 糖醋里脊 and a Diet Coke” is a completely ordinary phone order from a second-generation Chinese-American customer. Pure LLM handles that sentence. Pipelines that separate English and Chinese processing often don’t.
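A small sketch shows why a route-to-one-language step struggles with that sentence. It works on text and uses Unicode ranges as a crude proxy for language, whereas real language detection runs on audio, so treat it as an illustration of the routing problem only. Both function names are hypothetical.

```python
def is_han(ch: str) -> bool:
    """True for characters in the CJK Unified Ideographs block."""
    return "\u4e00" <= ch <= "\u9fff"


def scripts_in(utterance: str) -> set[str]:
    """Which scripts appear anywhere in the utterance."""
    scripts = set()
    for ch in utterance:
        if is_han(ch):
            scripts.add("han")
        elif ch.isascii() and ch.isalpha():
            scripts.add("latin")
    return scripts


def route_single_language(utterance: str) -> str:
    """A naive router that must pick exactly one language per utterance."""
    han = sum(1 for ch in utterance if is_han(ch))
    latin = sum(1 for ch in utterance if ch.isascii() and ch.isalpha())
    return "zh" if han > latin else "en"


order = "I want the 糖醋里脊 and a Diet Coke"
print(sorted(scripts_in(order)))     # ['han', 'latin']
print(route_single_language(order))  # en
```

The router sends the whole utterance to the English path, so the dish name, the most important part of the order, reaches a model that was never meant to handle it.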

Architecture Comparison at a Glance

| Dimension | Traditional Pipeline (ASR+NLP+TTS) | Pure LLM (Tunvo) | Human + AI Hybrid (Tarro model) |
|---|---|---|---|
| Error propagation | High (errors compound across model handoffs) | Low (single context) | Low for escalated calls (human catches) |
| Peak call capacity | Unlimited (software) | Unlimited (software) | Capped by agent count |
| Bilingual handling | Requires language routing | Native (no routing needed) | Depends on agent fluency |
| Response latency | 2–4 seconds | Sub-1 second | Human-speed (varies) |
| Cost per call | Low (software) | Low (software) | Higher (labor-based) |
| Consistency | High | High | Varies by agent |
| Handles edge cases | Struggles | Good (contextual inference) | Best (human judgment) |

The Cost Economics Are Not Close

The cost difference between AI voice and human-assisted models is substantial. Industry cost analysis puts AI voice at roughly $0.30–$0.50 per call versus $6–$7.68 per call for human call center agents — a 93–95% cost reduction. Even accounting for the fact that offshore agent models (like Tarro’s Philippines-based model, per Sacra research) cost significantly less than domestic labor, the gap between human labor and software compute is wide and widening as AI models improve.

For a takeout-focused Chinese restaurant handling 80–120 phone calls per day, the per-call difference adds up quickly. But the more important cost comparison isn’t per-call — it’s the structural cost at peak hours. During a two-hour dinner rush, you might receive 40% of your daily call volume. With a hybrid model, you’re either overstaffed at off-peak times or understaffed at peak times. With a pure AI system, there’s no staffing problem to optimize around. The AI handles however many calls arrive simultaneously, without overtime costs or missed calls.
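The figures quoted in this section can be worked through directly. The sketch below uses only numbers stated above (per-call costs, 80–120 calls per day, 40% of volume in the dinner rush); the function names are illustrative, and the daily totals are simple multiplication, not vendor pricing.

```python
AI_COST_PER_CALL = (0.30, 0.50)     # dollars, low and high estimates
HUMAN_COST_PER_CALL = (6.00, 7.68)  # dollars, low and high estimates
CALLS_PER_DAY = (80, 120)
RUSH_SHARE = 0.40                   # share of daily calls in the dinner rush


def reduction(ai_cost: float, human_cost: float) -> float:
    """Fractional saving of the AI cost relative to the human cost."""
    return 1 - ai_cost / human_cost


def daily_cost(calls: int, cost_per_call: float) -> float:
    return calls * cost_per_call


# Compare low estimate with low estimate, and high with high.
for ai, human in zip(AI_COST_PER_CALL, HUMAN_COST_PER_CALL):
    print(f"${ai:.2f} vs ${human:.2f} per call: {reduction(ai, human):.1%} lower")

for calls in CALLS_PER_DAY:
    rush_calls = round(calls * RUSH_SHARE)
    ai_low, ai_high = (daily_cost(calls, c) for c in AI_COST_PER_CALL)
    hu_low, hu_high = (daily_cost(calls, c) for c in HUMAN_COST_PER_CALL)
    print(
        f"{calls} calls/day ({rush_calls} in the rush): "
        f"AI ${ai_low:.2f}-${ai_high:.2f}, human ${hu_low:.2f}-${hu_high:.2f}"
    )
```

Pairing low with low and high with high gives 95.0% and 93.5%, which is where the 93–95% range comes from. The rush figure (32 to 48 calls in two hours) is the number that determines how many agents a hybrid model would need on hand.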

Tunvo’s model reflects this: software-based pricing rather than per-agent labor pricing, with the same capacity available during a quiet Tuesday afternoon and a packed New Year’s Eve dinner rush.

Where the Hybrid Model Still Has an Argument

To be fair, there are scenarios where a human fallback genuinely adds value. Complaint handling, where a customer is upset about a previous order, benefits from human empathy in ways that are hard to replicate in AI. Edge cases involving highly unusual requests that fall completely outside the menu structure (“can I get the beef dish but with the sauce from the pork dish, and half-portions of both”) may benefit from a human who can judge whether the kitchen can actually execute that.

The question is whether these edge cases justify the structural costs of maintaining a human-in-the-loop model for all calls. For most takeout-focused Chinese restaurants, the answer is probably no. The vast majority of calls are standard orders with standard modifications: spice level, protein substitution, add-ons, quantity changes. These are finite, well-defined, and well within what pure AI can handle.

Tunvo’s system allows call routing to your staff for genuinely exceptional situations. The difference from a hybrid model is that this is the rare exception, not the default path.
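One way to picture "exception, not default" is as an explicit escalation rule. The sketch below is hypothetical and does not describe Tunvo's actual logic: the signals (`is_complaint`, `off_menu_request`, `failed_clarifications`) and the threshold of two clarification attempts are assumptions chosen to mirror the scenarios discussed above.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    TRANSFER_TO_STAFF = "transfer_to_staff"


@dataclass
class TurnAssessment:
    is_complaint: bool
    off_menu_request: bool
    failed_clarifications: int


def next_action(turn: TurnAssessment, max_clarifications: int = 2) -> tuple[Action, str]:
    """Decide whether to keep handling the call or hand it to staff with a note."""
    if turn.is_complaint:
        return Action.TRANSFER_TO_STAFF, "caller is raising a complaint about a previous order"
    if turn.off_menu_request and turn.failed_clarifications >= max_clarifications:
        return Action.TRANSFER_TO_STAFF, "request falls outside the menu structure"
    return Action.CONTINUE, ""


# A standard order with no warning signs stays with the AI.
print(next_action(TurnAssessment(False, False, 0))[0].value)  # continue
# A complaint goes to staff immediately, with a note for whoever picks up.
print(next_action(TurnAssessment(True, False, 0))[0].value)   # transfer_to_staff
```

The contrast with a hybrid model is in the default branch: here the call stays automated unless a specific condition is met, rather than passing through a human by design.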

The Trajectory: Where AI Is Going

The trend in voice AI since 2022 has been unmistakably toward greater LLM integration and lower latency — with AI voice ordering platforms increasingly demonstrating that pure AI can achieve the accuracy and naturalism that hybrid models used to claim as their exclusive advantage.

For restaurant owners evaluating their options now, this creates a real decision: build your operation on the infrastructure of the previous generation (human + AI hybrid), or on the infrastructure of the current and next generation (pure LLM AI). The hybrid model was the right answer in 2015 when AI accuracy wasn’t sufficient to handle restaurant ordering independently. In 2026, the AI is good enough — and the economics of the pure AI model are compelling enough — that the hybrid model’s advantages have significantly narrowed.

What This Means for a Chinese Restaurant Owner Specifically

When I think about the New York Chinese restaurant owner evaluating phone ordering solutions, the relevant questions are: Can the system handle Mandarin? Can it handle simultaneous peak calls without dropping any? Does it connect directly to my POS so my kitchen team sees clean tickets? And what am I actually paying for?

Tunvo’s answer to all four is straightforward. The system handles both English and Mandarin natively. It runs unlimited simultaneous calls. It integrates directly with MenuSifu — the POS built specifically for Chinese restaurants. And the pricing reflects software costs, not labor costs.

The hybrid model’s best argument — the human safety net — is meaningful in theory and less meaningful in practice for the types of orders Chinese takeout restaurants typically handle. The pure LLM model’s advantages — scale, consistency, language flexibility, and economics — are structural and compound over time.

Frequently Asked Questions

If LLM AI is better, why do hybrid models like Tarro still exist?

Because they were designed when pure AI wasn’t reliable enough for restaurant ordering, and they have since built significant customer bases on that foundation. Switching costs are real: restaurants that have adapted their operations to a hybrid model would have to re-adapt. The hybrid model’s human safety net also genuinely reassures some restaurant owners, even if most calls never need it. Market transitions in technology rarely happen as fast as the technology itself improves.

What happens when Tunvo’s AI encounters something it genuinely can’t handle?

The system is configured with a graceful fallback: it can route the call to your staff, noting the situation. This happens rarely in practice for standard restaurant ordering scenarios — the order space is finite and the AI has comprehensive coverage of typical modifier patterns. When it does happen, the caller doesn’t get dropped; they get a warm transfer to your team.

Is LLM voice AI reliable enough for production use in a real restaurant?

Yes — the technology has crossed into production-readiness for restaurant ordering. Tunvo reports 95%+ order accuracy, which is consistent with what the broader industry sees from well-implemented AI voice ordering systems. The key is the specificity of training: a well-tuned restaurant AI that knows your menu deeply will outperform a general-purpose AI on restaurant-specific tasks.

How long does it take for Tunvo to learn my menu?

Setup takes approximately 30 minutes. During onboarding, you configure your menu in the system — items, modifiers, prices, variants. The AI’s menu comprehension isn’t learning in real time; it’s grounded in the structured menu data you provide. Updates to your menu are reflected in the system quickly. You can try it in a 15-day free trial with your actual menu to see how it performs on your specific items before committing.
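"Grounded in structured menu data" can be pictured as follows. This is a generic sketch, not Tunvo's schema: the dictionary layout, the dish, the prices, and the function name `validate_and_price` are all invented for illustration.

```python
# Hypothetical menu entry with variants, prices, and allowed modifiers.
MENU = {
    "General Tso's Chicken": {
        "variants": {"lunch": 9.95, "dinner": 14.95},
        "modifiers": {
            "spice": ["mild", "medium", "hot"],
            "base": ["rice", "noodles"],
        },
    },
}


def validate_and_price(menu: dict, item: str, variant: str, modifiers: dict[str, str]) -> float:
    """Check an order line against the menu and return its base price."""
    if item not in menu:
        raise ValueError(f"unknown item: {item}")
    entry = menu[item]
    if variant not in entry["variants"]:
        raise ValueError(f"{item} has no {variant} variant")
    for slot, choice in modifiers.items():
        allowed = entry["modifiers"].get(slot, [])
        if choice not in allowed:
            raise ValueError(f"{choice!r} is not a valid {slot} for {item}")
    return entry["variants"][variant]


print(validate_and_price(MENU, "General Tso's Chicken", "lunch", {"base": "noodles"}))  # 9.95
```

Because every item, variant, and modifier is enumerated up front, a request either matches the data or is flagged, which is why a menu update takes effect as soon as the data changes rather than after retraining.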

The technology has caught up to the promise. Pure LLM AI handles restaurant phone orders at scale, in multiple languages, at a fraction of the cost of any human-assisted model. Tunvo’s AI voice agent is built on this foundation — designed for Chinese restaurants in New York, integrated with MenuSifu, and set up in 30 minutes.